Sat May 05 16:09:16 2012 GMT: Cologne

Sun Apr 29 16:08:27 2012 GMT: EPOLLOUT|EPOLLET Behaviour

Sun Apr 22 19:18:47 2012 GMT: Design and Analysis of Algorithms I

Just completed the final exam for Design and Analysis of Algorithms I (taught by Tim Roughgarden of Stanford University). I have to admit that I slightly messed it up and only got 27 out of 30, but as I got the quizzes and programming assignments during the course right, my total score should still be quite reasonable. Let's wait for the certificate of accomplishment...

Sat Apr 21 19:24:24 2012 GMT: boost asio thread contention

Looking a bit more closely at what happens behind the scenes in boost asio when there are more worker threads than work to do (i.e. one socketpair with 4 worker threads from my previous boost asio example), strace reveals some interesting thread contention:

11687 sendmsg(7, {msg_name(0)=NULL, msg_iov(1)=[{"\7\0\0\0", 4}], msg_controllen=0, msg_flags=0}, MSG_NOSIGNAL <unfinished ...>

11689 <... epoll_wait resumed> {{EPOLLIN, {u32=36459312, u64=36459312}}}, 128, 4294967295) = 1

11687 <... sendmsg resumed> ) = 4

11689 futex(0x7f82a6211dd4, FUTEX_WAKE_OP_PRIVATE, 1, 1, 0x7f82a6211dd0, {FUTEX_OP_SET, 0, FUTEX_OP_CMP_GT, 1} <unfinished ...>

11687 futex(0x7f82af213dd4, FUTEX_WAKE_OP_PRIVATE, 1, 1, 0x7f82af213dd0, {FUTEX_OP_SET, 0, FUTEX_OP_CMP_GT, 1} <unfinished ...>

11690 <... futex resumed> ) = 0

11689 <... futex resumed> ) = 1

11690 futex(0x22c5150, FUTEX_WAKE_PRIVATE, 1 <unfinished ...>

11689 recvmsg(6, <unfinished ...>

11690 <... futex resumed> ) = 0

11689 <... recvmsg resumed> {msg_name(0)=NULL, msg_iov(1)=[{"\7\0\0\0", 4}], msg_controllen=0, msg_flags=0}, 0) = 4

11690 epoll_wait(4, <unfinished ...>

11689 recvmsg(6, <unfinished ...>

11690 <... epoll_wait resumed> {}, 128, 0) = 0

11689 <... recvmsg resumed> 0x7f82aea12370, 0) = -1 EAGAIN (Resource temporarily unavailable)

11690 epoll_wait(4, <unfinished ...>

11689 sendmsg(6, {msg_name(0)=NULL, msg_iov(1)=[{"\10\0\0\0", 4}], msg_controllen=0, msg_flags=0}, MSG_NOSIGNAL <unfinished ...>

What's interesting to see is that three threads (11687, 11689 and 11690) are involved, with significant locking (via futex) between them.

For comparison, the equivalent section from the strace for asyncsrv.cc only shows 2 active threads and no lock contention at all:

11711 sendto(7, "\7\0\0\0", 4, 0, NULL, 0) = 4

11710 <... epoll_wait resumed> {{EPOLLIN, {u32=7674112, u64=7674112}}}, 16, 4294967295) = 1

11711 epoll_wait(3, <unfinished ...>

11710 recvfrom(6, "\7\0\0\0", 4, 0, NULL, NULL) = 4

11710 recvfrom(6, 0x7518e0, 4, 0, 0, 0) = -1 EAGAIN (Resource temporarily unavailable)

11710 sendto(6, "\10\0\0\0", 4, 0, NULL, 0 <unfinished ...>

That's definitely what you would expect.
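To play with the same pattern without boost asio, here is a minimal single-threaded sketch of that ping-pong: a 4-byte counter travels over an AF_UNIX socketpair, the receiving end reads until EAGAIN (which edge-triggered epoll requires), increments the counter and sends it back. It is not asyncsrv.cc itself, which uses two threads; the name ping_pong and the one-second epoll_wait timeout are assumptions made for this sketch, and it is Linux-only.

```cpp
#include <sys/epoll.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cstdint>
#include <cassert>  // only needed for the usage checks

// Hypothetical helper: bounce a 4-byte counter between the two ends of an
// AF_UNIX socketpair, single-threaded, until it reaches `rounds`.
// Returns the final count, or -1 on error/timeout.
// Assumes each 4-byte message is read whole, which holds for this
// one-message-in-flight ping-pong but is not guaranteed for stream sockets.
int ping_pong(uint32_t rounds) {
    int sv[2];
    if (socketpair(AF_UNIX, SOCK_STREAM | SOCK_NONBLOCK, 0, sv) == -1) return -1;
    int ep = epoll_create1(0);
    if (ep == -1) { close(sv[0]); close(sv[1]); return -1; }
    for (int fd : sv) {
        epoll_event ev{};
        ev.events = EPOLLIN | EPOLLET;  // readability only, edge-triggered
        ev.data.fd = fd;
        epoll_ctl(ep, EPOLL_CTL_ADD, fd, &ev);
    }
    uint32_t counter = 0;
    send(sv[0], &counter, sizeof counter, MSG_NOSIGNAL);  // kick off with 0
    while (counter < rounds) {
        epoll_event evs[16];
        int n = epoll_wait(ep, evs, 16, 1000);  // 1 s timeout instead of blocking forever
        if (n <= 0) break;                      // error or timeout: give up
        for (int i = 0; i < n; ++i) {
            int fd = evs[i].data.fd;
            uint32_t value;
            // Edge-triggered: keep reading until recv fails with EAGAIN,
            // otherwise leftover data would never be reported again.
            while (recv(fd, &value, sizeof value, 0) == static_cast<ssize_t>(sizeof value)) {
                counter = value + 1;
                if (counter < rounds) send(fd, &counter, sizeof counter, MSG_NOSIGNAL);
            }
        }
    }
    close(sv[0]);
    close(sv[1]);
    close(ep);
    return counter == rounds ? static_cast<int>(counter) : -1;
}
```

Called from main, ping_pong(1000) should return 1000. Run under strace, it should show roughly the same sendto/epoll_wait/recvfrom/EAGAIN rhythm as the asyncsrv.cc excerpt, just from a single thread and without any futex calls.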

BTW, I applied a crude fix to boost asio for the spurious EPOLLOUT events so as not to get distracted by them.
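The crude fix itself isn't shown here. For illustration only, here is one general epoll idiom that avoids these events altogether (not a description of boost asio's internals or of that fix): leave EPOLLOUT out of the interest set by default, add it with EPOLL_CTL_MOD only after a send has failed with EAGAIN, and drop it again once the socket is writable. The names ArmDemo, set_interest, count_events and demo_arm_epollout_on_eagain are hypothetical; the sketch is Linux-only and uses an AF_UNIX socketpair like the examples in these posts.

```cpp
#include <sys/epoll.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cstdint>
#include <cassert>  // only needed for the usage checks

// Hypothetical result: EPOLLOUT events reported for the sending end.
struct ArmDemo {
    int unarmed_epollout = -1;  // ordinary sends while EPOLLOUT is not in the interest set
    int armed_epollout = -1;    // after EAGAIN -> arm EPOLLOUT -> peer drains its data
};

// Add or modify `fd` in the epoll set, always edge-triggered.
static bool set_interest(int ep, int fd, uint32_t events, int op) {
    epoll_event ev{};
    ev.events = events | EPOLLET;
    ev.data.fd = fd;
    return epoll_ctl(ep, op, fd, &ev) == 0;
}

// Run one epoll_wait (1 s timeout) and count `what` events reported for `fd`.
static int count_events(int ep, int fd, uint32_t what) {
    epoll_event evs[16];
    int n = epoll_wait(ep, evs, 16, 1000);
    int hits = 0;
    for (int i = 0; i < n; ++i)
        if (evs[i].data.fd == fd && (evs[i].events & what)) ++hits;
    return hits;
}

ArmDemo demo_arm_epollout_on_eagain(int rounds) {
    ArmDemo r;
    int sv[2];
    if (socketpair(AF_UNIX, SOCK_STREAM | SOCK_NONBLOCK, 0, sv) == -1) return r;
    int ep = epoll_create1(0);
    // Default interest for both ends: readability only.
    set_interest(ep, sv[0], EPOLLIN, EPOLL_CTL_ADD);
    set_interest(ep, sv[1], EPOLLIN, EPOLL_CTL_ADD);
    char buf[4096] = {};

    // (a) Ordinary traffic: send, wait, drain. EPOLLOUT is never reported
    //     for the sender because it is not in its interest set.
    r.unarmed_epollout = 0;
    for (int i = 0; i < rounds; ++i) {
        send(sv[0], buf, 4, MSG_NOSIGNAL);
        r.unarmed_epollout += count_events(ep, sv[0], EPOLLOUT);
        while (recv(sv[1], buf, sizeof buf, 0) > 0) {}  // drain until EAGAIN
    }

    // (b) Fill the send buffer until send fails (EAGAIN); only now arm EPOLLOUT.
    while (send(sv[0], buf, sizeof buf, MSG_NOSIGNAL) > 0) {}
    set_interest(ep, sv[0], EPOLLIN | EPOLLOUT, EPOLL_CTL_MOD);
    while (recv(sv[1], buf, sizeof buf, 0) > 0) {}  // the peer catches up
    r.armed_epollout = count_events(ep, sv[0], EPOLLOUT);
    // Writable again: disarm so that later sends don't generate wakeups.
    set_interest(ep, sv[0], EPOLLIN, EPOLL_CTL_MOD);

    close(sv[0]);
    close(sv[1]);
    if (ep != -1) close(ep);
    return r;
}
```

With EPOLLOUT unarmed, demo_arm_epollout_on_eagain(20) should see no EPOLLOUT at all on the sending end during the 20 ordinary sends, and exactly one once the buffer has been filled, EPOLLOUT armed and the peer drained. The cost is an extra epoll_ctl call whenever a send buffer fills up, which is usually much rarer than the sends themselves.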

Sat Apr 21 14:51:42 2012 GMT: Spurious EPOLLOUT events

One thing I noticed when looking at epoll scalability was that Linux seems to generate lots of spurious EPOLLOUT events (even when using EPOLLET, i.e. edge-triggered mode). To illustrate the issue, have a look at eptest-out.cc, which clearly shows that Linux generates an EPOLLOUT event for each send syscall even though there is no need, as the socket's state hasn't changed (it was writable before the send and still is afterwards).

BTW, the strace output also clearly shows those events:

sendto(4, "\7\0\0\0", 4, 0, NULL, 0) = 4

epoll_wait(3, {{EPOLLOUT, {u32=4, u64=4}}, {EPOLLIN|EPOLLOUT, {u32=5, u64=5}}}, 16, 4294967295) = 2

recvfrom(5, "\7\0\0\0", 4096, 0, NULL, NULL) = 4

recvfrom(5, 0x7fffa14e1000, 4096, 0, 0, 0) = -1 EAGAIN (Resource temporarily unavailable)

sendto(4, "\10\0\0\0", 4, 0, NULL, 0) = 4

epoll_wait(3, {{EPOLLOUT, {u32=4, u64=4}}, {EPOLLIN|EPOLLOUT, {u32=5, u64=5}}}, 16, 4294967295) = 2

recvfrom(5, "\10\0\0\0", 4096, 0, NULL, NULL) = 4

recvfrom(5, 0x7fffa14e1000, 4096, 0, 0, 0) = -1 EAGAIN (Resource temporarily unavailable)

Note that in this case the EPOLLOUT event on the sending socket always coincides with an EPOLLIN|EPOLLOUT event on the receiving socket, so you don't get an extra wakeup, but you aren't always that lucky...
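eptest-out.cc isn't reproduced here, but a rough stand-in for counting these events could look like the sketch below: both ends of an AF_UNIX socketpair are registered with EPOLLIN|EPOLLOUT|EPOLLET, one 4-byte message is sent per round, and the code counts how often the sending end is reported with EPOLLOUT even though it never stopped being writable. EpolloutProbe and probe_spurious_epollout are hypothetical names, and the sender count depends on the kernel version, so treat it as a probe rather than a test with a fixed answer.

```cpp
#include <sys/epoll.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cstdint>
#include <cassert>  // only needed for the usage checks

// Hypothetical result type for the probe below.
struct EpolloutProbe {
    int rounds = 0;            // 4-byte messages sent
    int receiver_epollin = 0;  // EPOLLIN reported on the receiving end
    int sender_epollout = 0;   // EPOLLOUT reported on the sending end (writable throughout)
};

EpolloutProbe probe_spurious_epollout(int rounds) {
    EpolloutProbe r;
    int sv[2];
    if (socketpair(AF_UNIX, SOCK_STREAM | SOCK_NONBLOCK, 0, sv) == -1) return r;
    int ep = epoll_create1(0);
    for (int fd : sv) {
        epoll_event ev{};
        ev.events = EPOLLIN | EPOLLOUT | EPOLLET;
        ev.data.fd = fd;
        epoll_ctl(ep, EPOLL_CTL_ADD, fd, &ev);
    }
    epoll_event evs[16];
    epoll_wait(ep, evs, 16, 0);  // consume the initial (legitimate) "writable" edge of both ends

    bool ok = true;
    for (int i = 0; i < rounds && ok; ++i) {
        uint32_t msg = static_cast<uint32_t>(i);
        if (send(sv[0], &msg, sizeof msg, MSG_NOSIGNAL) != static_cast<ssize_t>(sizeof msg)) break;
        ++r.rounds;
        bool got_in = false;
        while (!got_in) {  // wait until the receiver has been reported
            int n = epoll_wait(ep, evs, 16, 1000);
            if (n <= 0) { ok = false; break; }  // error or timeout
            for (int j = 0; j < n; ++j) {
                if (evs[j].data.fd == sv[0] && (evs[j].events & EPOLLOUT)) ++r.sender_epollout;
                if (evs[j].data.fd == sv[1] && (evs[j].events & EPOLLIN)) {
                    ++r.receiver_epollin;
                    got_in = true;
                    char buf[64];
                    while (recv(sv[1], buf, sizeof buf, 0) > 0) {}  // drain until EAGAIN
                }
            }
        }
    }
    close(sv[0]);
    close(sv[1]);
    if (ep != -1) close(ep);
    return r;
}
```

probe_spurious_epollout(50) should report 50 rounds and 50 receiver wakeups; on a kernel that behaves like the one traced above, sender_epollout would presumably be close to 50 as well.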

Sun Apr 01 11:03:28 2012 GMT: Run, Christof, Run

Just couldn't stop running this morning and ended up doing 7 laps around Battersea Park - Google Maps reckons it's about 3.3 km per lap - which would mean around 23 km in total.

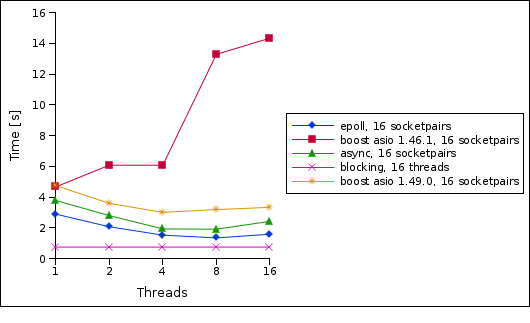

Thu Mar 29 20:43:00 2012 GMT: boost asio 1.46.1 vs. boost asio 1.49.0

I have now re-run my socket communication tests with Boost 1.49.0, which shows a huge improvement in the 16-socketpair case, where we actually have some real concurrency:

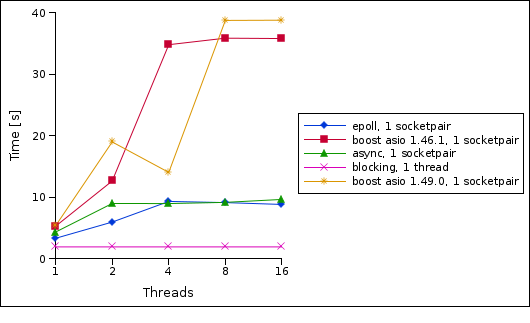

But the single-socketpair case still shows rather bad performance when using multiple worker threads.

Stay tuned...

Tue Mar 27 18:19:40 2012 GMT: Opera 11.62

Sun Mar 25 00:31:10 2012 GMT: Overhead and Scalability of I/O Strategies

Sat Mar 24 23:28:16 2012 GMT: Romeo and Juliet @ Royal Opera House

Wed Mar 21 22:08:09 2012 GMT: kevent on FreeBSD (Update)

Sun Mar 18 20:55:50 2012 GMT: Multi-threaded epoll/kqueue